Large language models (LLMs) have rapidly evolved from experimental research tools into mission-critical components of modern software products. However, out-of-the-box foundation models rarely deliver optimal performance for specialized domains such as healthcare, finance, legal services, education, or customer support. This gap has driven the rise of LLM fine-tuning platforms—tools and services designed to help organizations customize powerful base models for highly specific use cases.

TL;DR: LLM fine-tuning platforms allow organizations to adapt large language models to specialized domains, tasks, and workflows. These platforms simplify data preparation, training, evaluation, and deployment without requiring deep machine learning expertise. They support methods such as supervised fine-tuning, parameter-efficient tuning, and reinforcement learning. Choosing the right platform depends on data sensitivity, scalability, infrastructure, and the complexity of the use case.

As more companies integrate AI into their products and internal operations, fine-tuning platforms have become essential infrastructure. They reduce technical complexity while enabling businesses to build smarter, more reliable AI systems.

Why Fine-Tuning Matters for Specialized Applications

General-purpose LLMs are trained on vast amounts of public data, which gives them broad knowledge but not necessarily domain precision. For example, a legal tech platform requires highly structured contract interpretation, while a healthcare assistant must adhere to strict clinical terminology and compliance standards.

Fine-tuning allows organizations to:

- Improve domain accuracy by training on proprietary datasets.

- Align outputs with brand voice, tone, and company policies.

- Reduce hallucinations in specialized contexts.

- Ensure regulatory compliance in sensitive industries.

- Optimize task performance for workflows such as summarization, classification, or data extraction.

Instead of building a model from scratch, which requires enormous computational resources and expertise, fine-tuning platforms build upon pre-trained foundation models to create domain-adapted versions efficiently.

Core Capabilities of LLM Fine-Tuning Platforms

Modern fine-tuning platforms combine multiple components into unified systems. They typically provide:

1. Data Preparation and Management

High-quality data is critical for successful model customization. Platforms often include:

- Dataset upload and secure storage

- Annotation and labeling tools

- Data validation and cleaning features

- Version control for datasets

Some platforms also offer synthetic data generation to augment limited datasets, which is particularly useful for rare or sensitive scenarios.
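As a rough illustration of the validation and cleaning step, the sketch below assumes a hypothetical dataset of `prompt`/`completion` records (a common but not universal layout for supervised fine-tuning data) and applies a few basic checks before exporting JSON Lines. The field names, the `max_chars` limit, and the function names are illustrative assumptions, not any particular platform's format.

```python
import json
from collections import Counter

# Hypothetical schema: each training example is a prompt/completion pair.
REQUIRED_FIELDS = ("prompt", "completion")


def clean_dataset(records, max_chars=4000):
    """Validate, normalize, and deduplicate prompt/completion records.

    Returns the cleaned records and a Counter describing why rows were dropped.
    """
    cleaned = []
    seen = set()
    dropped = Counter()
    for rec in records:
        # Reject rows that lack a required field or whose value is not a string.
        if not all(isinstance(rec.get(f), str) for f in REQUIRED_FIELDS):
            dropped["missing_or_non_string_field"] += 1
            continue
        # Normalize surrounding whitespace before the remaining checks.
        prompt = rec["prompt"].strip()
        completion = rec["completion"].strip()
        if not prompt or not completion:
            dropped["empty_field"] += 1
            continue
        # Crude length guard; real pipelines usually count tokens, not characters.
        if len(prompt) + len(completion) > max_chars:
            dropped["too_long"] += 1
            continue
        # Drop exact duplicates (after whitespace normalization).
        key = (prompt, completion)
        if key in seen:
            dropped["duplicate"] += 1
            continue
        seen.add(key)
        cleaned.append({"prompt": prompt, "completion": completion})
    return cleaned, dropped


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Usage with a tiny, made-up sample.
raw = [
    {"prompt": "Summarize clause 4.2", "completion": "The tenant pays utilities."},
    {"prompt": "Summarize clause 4.2 ", "completion": "The tenant pays utilities."},
    {"prompt": "", "completion": "orphan answer"},
    {"prompt": "Classify this ticket"},
]
cleaned, dropped = clean_dataset(raw)
```

Only the first record survives here: the second is a duplicate once whitespace is trimmed, the third has an empty prompt, and the fourth is missing its completion. Keeping the drop reasons in a counter makes it easy to report data quality alongside each dataset version.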

2. Multiple Fine-Tuning Techniques

Rather than offering a single training method, advanced platforms support a range of approaches:

- Supervised Fine-Tuning (SFT): Training the model on curated input-output pairs.

- Parameter-Efficient Fine-Tuning (PEFT): Techniques such as LoRA that freeze the base model's weights and train only a small number of additional or selected parameters.

- Reinforcement Learning from Human Feedback (RLHF): Training a reward model on human preference judgments, then optimizing the model against that reward to improve response quality.

- Instruction tuning: Adapting models to follow task-specific prompts more reliably.

This flexibility allows organizations to balance performance improvements with computational cost.
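To make the parameter-efficient idea concrete, here is a minimal, framework-free sketch of a LoRA-style linear layer. It follows the formulation from the original LoRA paper (a frozen weight W plus a low-rank update B A scaled by alpha / r, with B initialized to zero), but the class and helper names are hypothetical, and real implementations operate on GPU tensors inside a training framework rather than Python lists.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Minimal LoRA-style linear layer: h = W x + (alpha / r) * B (A x).

    W is frozen; only A (r x d_in) and B (d_out x r) would be trained.
    """

    def __init__(self, weight, r=2, alpha=4.0, seed=0):
        rng = random.Random(seed)
        self.weight = weight  # frozen pre-trained weights, shape d_out x d_in
        d_out, d_in = len(weight), len(weight[0])
        self.scale = alpha / r
        # A starts small and random, B starts at zero, so the adapter
        # initially leaves the base layer's output unchanged.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)            # frozen path
        delta = matvec(self.B, matvec(self.A, x))  # low-rank trainable path
        return [b + self.scale * d for b, d in zip(base, delta)]

    def trainable_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])

    def frozen_params(self):
        return len(self.weight) * len(self.weight[0])


# Usage: a toy 4 x 6 "pre-trained" weight matrix.
W = [[float(i + j) for j in range(6)] for i in range(4)]
x = [1.0] * 6
layer = LoRALinear(W, r=2)
```

Because B starts at zero, `layer.forward(x)` initially matches `matvec(W, x)` exactly; training then updates only A and B. At this toy size the adapter is not much smaller than W, but the gap grows quickly with realistic layer widths, which is also why adapters can be stored and swapped separately from the base model.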

3. Evaluation and Monitoring

Evaluation is where many AI projects fail. Fine-tuning platforms typically provide:

- Predefined benchmark metrics

- Custom evaluation pipelines

- Human-in-the-loop testing tools

- Bias and toxicity detection

These tools help verify that fine-tuned models perform as expected before deployment.
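A custom evaluation pipeline can be as simple as scoring a held-out set and gating deployment on the result. The sketch below assumes a classification-style task where exact-match accuracy is meaningful; the models are stand-in Python callables (`baseline`, `candidate`) rather than calls to any real platform API, and the `min_gain` threshold is an illustrative assumption.

```python
from typing import Callable, Iterable, Tuple


def evaluate(model: Callable[[str], str], examples: Iterable[Tuple[str, str]]) -> float:
    """Return exact-match accuracy of `model` on (input, expected) pairs."""
    examples = list(examples)
    if not examples:
        raise ValueError("evaluation set is empty")
    # Case- and whitespace-insensitive exact match.
    correct = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in examples
    )
    return correct / len(examples)


def deployment_gate(baseline, candidate, examples, min_gain=0.0):
    """Approve the candidate only if it beats the baseline by at least `min_gain`."""
    base_acc = evaluate(baseline, examples)
    cand_acc = evaluate(candidate, examples)
    return cand_acc - base_acc >= min_gain, base_acc, cand_acc


# Usage: a made-up held-out set for support-ticket classification.
eval_set = [
    ("My card was charged twice", "billing"),
    ("The app crashes on login", "technical"),
    ("How do I reset my password?", "account"),
    ("Refund for last month's invoice", "billing"),
]


def baseline(text):
    # Stand-in for the base model: always predicts the most common label.
    return "billing"


def candidate(text):
    # Stand-in for the fine-tuned model.
    t = text.lower()
    if "password" in t:
        return "account"
    if "crash" in t:
        return "technical"
    return "billing"


approved, base_acc, cand_acc = deployment_gate(baseline, candidate, eval_set, min_gain=0.1)
```

Exact match suits classification and extraction tasks; open-ended generation such as summarization usually needs other metrics, model-based grading, or human review, which is where the human-in-the-loop tools above come in.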

4. Deployment and Lifecycle Management

Beyond training, platforms often include:

- API endpoints for production use

- Scalable inference infrastructure

- Continuous retraining workflows

- Usage analytics and performance tracking

This end-to-end approach reduces friction between development and real-world implementation.

Types of LLM Fine-Tuning Platforms

Not all platforms are identical. They can generally be categorized into three major groups.

1. Cloud-Based Managed Platforms

These services operate entirely in the cloud and provide user-friendly interfaces. They are ideal for teams that:

- Lack deep machine learning infrastructure expertise

- Need rapid experimentation

- Prefer managed security and scaling

Cloud platforms typically handle compute provisioning, scalability, and monitoring automatically.

2. Open-Source Frameworks

Some organizations prefer open-source ecosystems that provide flexibility and transparency. These frameworks often require:

- Internal infrastructure management

- MLOps engineering support

- Technical expertise in distributed training

While they demand more effort, they offer maximum control and customization.

3. Enterprise AI Platforms

Large-scale enterprises often adopt platforms that integrate:

- Data governance policies

- Role-based access controls

- Audit trails and compliance reporting

- Private cloud or hybrid deployments

These platforms emphasize security, traceability, and integration with existing business systems.

Use Cases Across Industries

Fine-tuning platforms unlock AI applications that go far beyond generic chatbots. Across industries, customized models are delivering measurable value.

Healthcare

Healthcare providers use fine-tuned LLMs for:

- Clinical documentation summarization

- Medical coding assistance

- Patient triage chat systems

- Research literature analysis

Domain-trained models can better understand medical terminology and reduce potentially harmful inaccuracies.

Finance

Financial institutions rely on fine-tuning to:

- Analyze earnings calls

- Detect fraud patterns

- Automate compliance reporting

- Provide wealth management recommendations

Customization improves both speed and regulatory alignment.

Legal Services

Law firms and legal tech companies use fine-tuned models to:

- Draft and review contracts

- Extract key clauses

- Summarize case law

- Support discovery processes

Fine-tuned models can be trained on jurisdiction-specific language, improving precision and reliability.

Customer Support

Companies customize LLMs to power support agents that:

- Reflect brand tone and style

- Access proprietary knowledge bases

- Automate common queries

- Escalate complex tickets intelligently

Unlike general chatbots, these systems are aligned to specific workflows and internal processes.

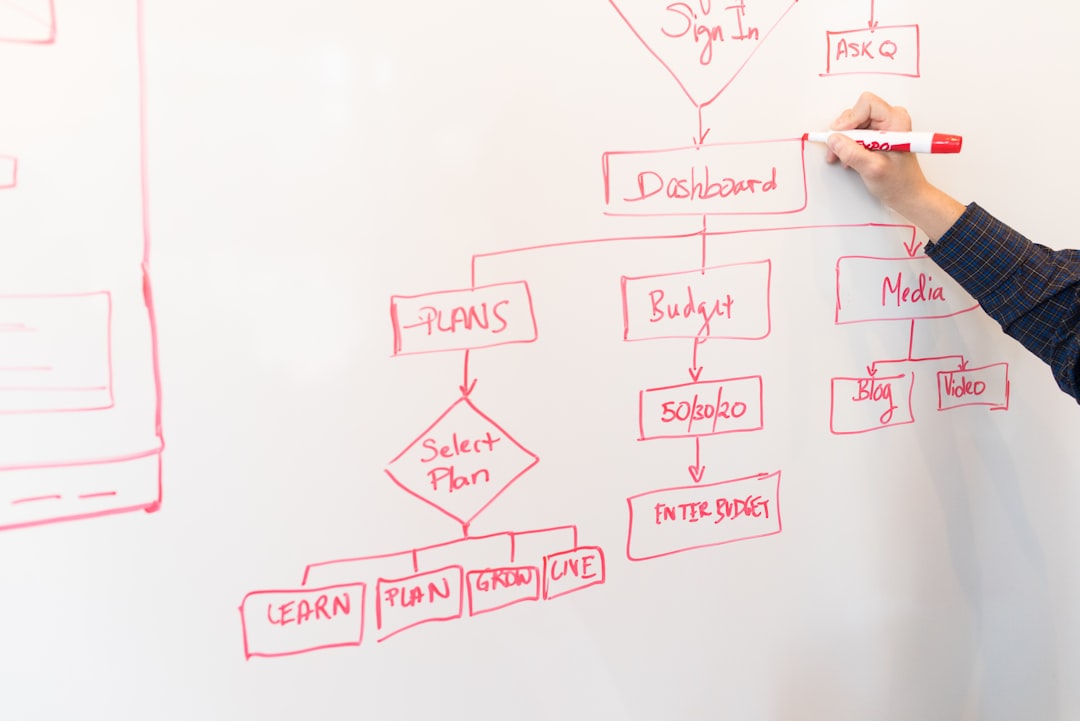

Key Considerations When Choosing a Fine-Tuning Platform

Selecting the right platform requires balancing technical, financial, and operational factors.

1. Data Privacy and Security

Organizations handling sensitive information must assess:

- Encryption standards

- Data residency requirements

- Compliance certifications

- Private deployment options

2. Scalability

As usage grows, inference demand can increase rapidly. A suitable platform should support:

- Elastic compute scaling

- Load balancing

- Global deployment infrastructure

3. Cost Efficiency

Fine-tuning can be expensive due to compute-intensive training. Parameter-efficient techniques and modular infrastructure can help control costs.
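The savings from parameter-efficient techniques are easy to estimate with simple arithmetic. For a weight matrix of shape d_out × d_in, a rank-r LoRA adapter trains r(d_out + d_in) parameters instead of d_out · d_in. The sketch below assumes a hypothetical model with 32 layers, hidden size 4096, and adapters on two square attention projections per layer; these sizes are illustrative and not tied to a specific model.

```python
def lora_params(shapes, r):
    """Trainable parameters when each (d_out, d_in) matrix gets a rank-r adapter."""
    return sum(r * (d_out + d_in) for d_out, d_in in shapes)


def dense_params(shapes):
    """Parameters updated if the same matrices were fine-tuned directly."""
    return sum(d_out * d_in for d_out, d_in in shapes)


# Hypothetical model: 32 layers, hidden size 4096, adapters on two
# square attention projections per layer.
shapes = [(4096, 4096)] * (32 * 2)
full = dense_params(shapes)
lora = lora_params(shapes, r=8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

Here the adapters train 4,194,304 parameters versus 1,073,741,824 for the same matrices, about 0.39% (for square d × d matrices the fraction is 2r/d). Full fine-tuning would also update every other weight in the model, so the real reduction in trainable parameters, and in the optimizer state that goes with them, is larger still.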

4. Customization Depth

Some use cases require light instruction tuning, while others demand deep domain retraining. Organizations should evaluate whether the platform supports:

- Full model fine-tuning

- Adapter-based tuning

- Multi-model orchestration

The Emerging Future of LLM Customization

As models continue to grow in size and capability, fine-tuning platforms are evolving alongside them. Emerging trends include:

- AutoML-driven tuning: Automated hyperparameter optimization and dataset refinement.

- Low-code interfaces: Enabling business teams to participate in customization.

- Continuous learning systems: Models that update from real-time feedback.

- Multi-modal adaptation: Fine-tuning text models alongside image and audio capabilities.

The future points toward democratization. Fine-tuning will become less about deep technical expertise and more about strategic data ownership and application design.

Ultimately, organizations that leverage fine-tuning platforms effectively gain a competitive advantage. They move beyond generic AI outputs toward systems that understand their customers, industry, and operational context in detail.

Frequently Asked Questions (FAQ)

1. What is LLM fine-tuning?

LLM fine-tuning is the process of adapting a pre-trained large language model to perform better on a specific domain, dataset, or task by training it on specialized data.

2. Is fine-tuning always necessary?

No. Some applications can rely on prompt engineering or retrieval-augmented generation. However, tasks requiring consistent domain-specific accuracy often benefit significantly from fine-tuning.

3. How much data is required for fine-tuning?

The amount varies by complexity. Small instruction-tuning tasks may require only a few thousand examples, while deep domain adaptation may require hundreds of thousands or more.

4. What is parameter-efficient fine-tuning?

Parameter-efficient methods, such as adapters or LoRA, keep the pre-trained weights frozen and train only a small number of additional parameters. This reduces computational cost and storage requirements while maintaining strong performance.

5. Can fine-tuned models be updated over time?

Yes. Many platforms support continuous retraining or incremental updates, allowing models to adapt to new data and evolving business needs.

6. Are fine-tuned models secure?

Security depends on the platform and deployment model. Enterprise-grade platforms typically offer encryption, compliance certifications, and private hosting options.

7. How do organizations choose the right platform?

They should evaluate data sensitivity, infrastructure resources, scalability needs, budget constraints, and the complexity of their target use case before making a decision.