Artificial intelligence is getting smarter. But the real magic? It lies in meaning. Not just words. Not just data. Meaning.

That is where embedding model platforms come in. They help computers understand relationships between words, images, audio, and more. They turn messy information into clean numbers. And those numbers unlock powerful semantic AI applications.

TLDR: Embedding models convert words, images, and other data into numerical vectors that capture meaning. Platforms that provide these models make it easy to build semantic search, recommendation engines, chatbots, and more. Some platforms are plug-and-play, while others offer deep customization. Choosing the right one depends on your scale, budget, and technical needs.

What Is an Embedding (In Plain English)?

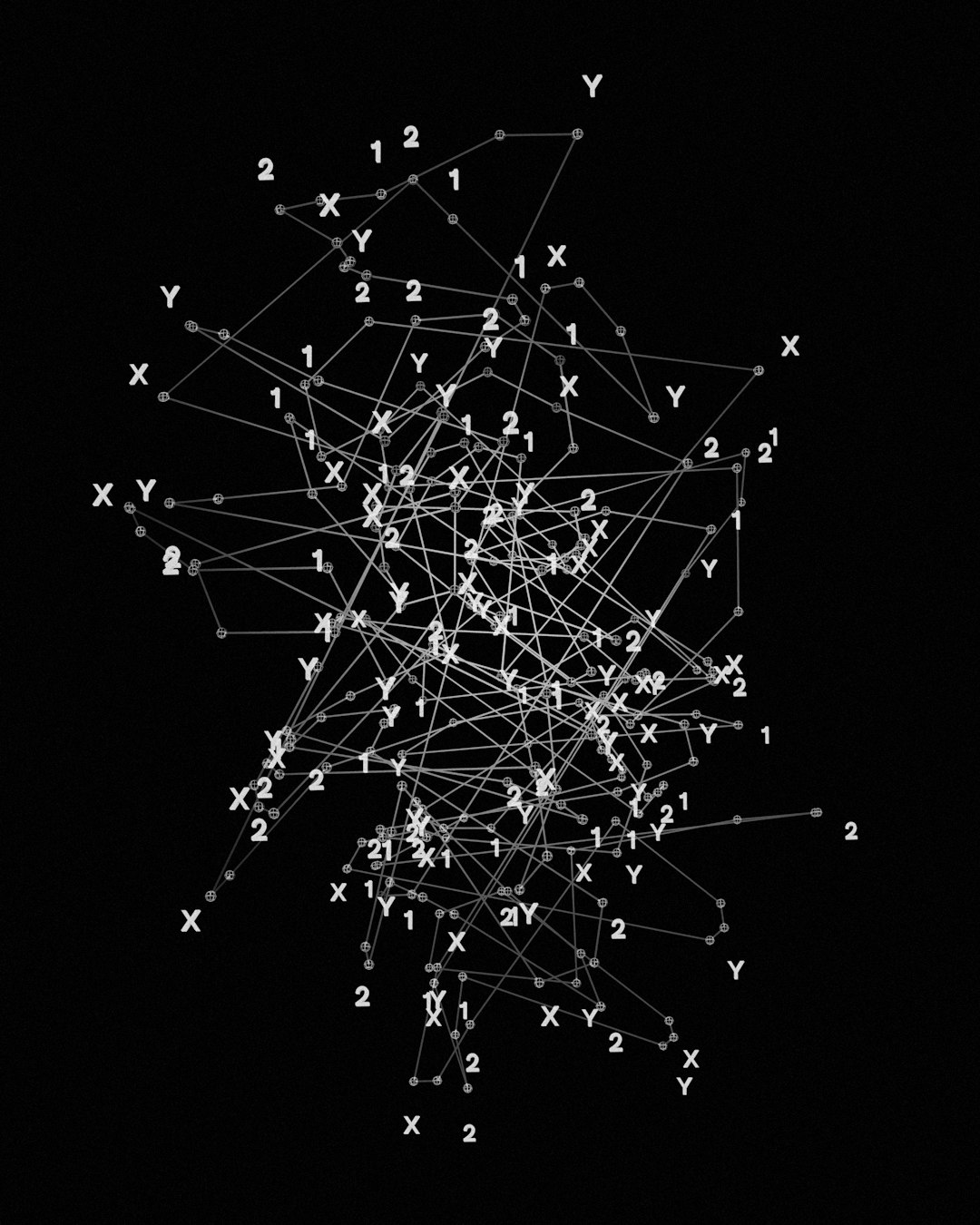

Imagine every word had GPS coordinates. Not on a map. But in a meaning space.

In this space:

- “Dog” is close to “puppy”

- “King” is near “queen”

- “Pizza” is far from “volcano”

An embedding is just a list of numbers. But those numbers represent meaning. They capture relationships.

Instead of processing raw text, your AI system processes vectors. Vectors are easier to compare. Easier to search. Easier to cluster.

That is semantic intelligence.
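To make "close" and "far" concrete, here is a minimal sketch of cosine similarity, one common way to compare two embeddings. The three-number vectors are made up by hand for illustration. Real models return hundreds or thousands of dimensions, and the values below do not come from any actual model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made toy vectors (assumption): not the output of any real embedding model.
toy_embeddings = {
    "dog": [0.9, 0.8, 0.1],
    "puppy": [0.85, 0.9, 0.15],
    "volcano": [0.1, 0.05, 0.95],
}

dog_puppy = cosine_similarity(toy_embeddings["dog"], toy_embeddings["puppy"])
dog_volcano = cosine_similarity(toy_embeddings["dog"], toy_embeddings["volcano"])

print(f"dog vs puppy:   {dog_puppy:.3f}")
print(f"dog vs volcano: {dog_volcano:.3f}")
```

With these toy values, "dog" scores much higher against "puppy" than against "volcano". That is the kind of signal a semantic system relies on.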

Why Embedding Platforms Matter

You could build embedding models from scratch. But that takes time. Data. Hardware. Expertise.

Embedding model platforms solve that problem.

They offer:

- Pre-trained models

- APIs for fast integration

- Optimization tools

- Scalable infrastructure

- Security and access controls

That means you can focus on building your product. Not training neural networks for months.

What Can You Build with Embedding Models?

Short answer? A lot.

Here are some popular use cases:

1. Semantic Search

Search by meaning, not keywords. Users type “cheap places to eat,” and your system finds “affordable restaurants nearby.”
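A rough sketch of the ranking step might look like this. The document vectors and the query vector are hand-written stand-ins (an assumption for illustration). In a real system both would come from the same embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def semantic_search(query_vector, documents, top_k=2):
    """Return the top_k (score, text) pairs, most similar first."""
    scored = [(cosine_similarity(query_vector, vec), text) for text, vec in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Toy vectors (assumption): a real embedding model would produce these.
documents = [
    ("affordable restaurants nearby", [0.9, 0.8, 0.1]),
    ("luxury hotel deals", [0.1, 0.2, 0.9]),
    ("car repair shops", [0.05, 0.3, 0.6]),
]
query_vector = [0.85, 0.9, 0.05]  # stand-in for "cheap places to eat"

results = semantic_search(query_vector, documents, top_k=2)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

Note that the query and the best match share no keywords. The match comes entirely from the vectors pointing in a similar direction.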

2. Recommendation Engines

Suggest products, articles, or videos based on similarity in embedding space.
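One simple way to do this, sketched below, is to average the embeddings of items a user liked and then recommend the nearest items they have not seen. The catalog, its vectors, and the `recommend` helper are all hypothetical. Production recommenders usually blend in many more signals.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def mean_vector(vectors):
    """Element-wise average of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def recommend(liked_ids, catalog, top_k=2):
    """Rank items the user has not liked yet by similarity to their taste profile."""
    profile = mean_vector([catalog[item_id] for item_id in liked_ids])
    candidates = [
        (cosine_similarity(profile, vector), item_id)
        for item_id, vector in catalog.items()
        if item_id not in liked_ids
    ]
    candidates.sort(reverse=True)
    return [item_id for _, item_id in candidates[:top_k]]


# Toy item embeddings (assumption): hand-written, not from a real model.
catalog = {
    "hiking boots": [0.9, 0.1, 0.2],
    "trail backpack": [0.8, 0.2, 0.1],
    "camping stove": [0.7, 0.3, 0.2],
    "espresso machine": [0.1, 0.9, 0.1],
    "office chair": [0.1, 0.2, 0.9],
}

recommendations = recommend(["hiking boots", "trail backpack"], catalog, top_k=1)
print(recommendations)
```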

3. Chatbots and Virtual Assistants

Understand intent. Retrieve relevant documents. Power RAG (Retrieval-Augmented Generation) systems.

4. Content Clustering

Group similar documents automatically. Great for research platforms and news aggregation.
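As a minimal sketch, the grouping below puts each document into the first cluster whose seed document is similar enough, and starts a new cluster otherwise. This greedy threshold approach is a simplification chosen for brevity, not k-means or any production algorithm, and the two-dimensional vectors are invented for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def cluster_by_threshold(items, threshold=0.8):
    """Greedy clustering: join the first cluster whose seed is similar enough."""
    clusters = []  # each cluster is a list of (text, vector); the first entry is the seed
    for text, vector in items:
        for cluster in clusters:
            seed_vector = cluster[0][1]
            if cosine_similarity(vector, seed_vector) >= threshold:
                cluster.append((text, vector))
                break
        else:
            # No existing cluster was close enough, so this item seeds a new one.
            clusters.append([(text, vector)])
    return [[text for text, _ in cluster] for cluster in clusters]


# Toy headline embeddings (assumption): hand-written for illustration.
headlines = [
    ("Local team wins the cup", [0.9, 0.1]),
    ("Markets rally after rate cut", [0.1, 0.9]),
    ("Star striker signs new contract", [0.8, 0.2]),
    ("Central bank holds rates steady", [0.2, 0.95]),
]

groups = cluster_by_threshold(headlines, threshold=0.8)
for group in groups:
    print(group)
```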

5. Fraud Detection

Spot unusual patterns. Compare transaction embeddings to normal behavior.
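One simple version of that comparison, sketched here under toy assumptions, measures how far a new embedding sits from the centroid of known-normal embeddings. The vectors and the three-standard-deviations threshold are illustrative choices. Real fraud systems combine many more features and models.

```python
import math
import statistics


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    return [statistics.mean(column) for column in zip(*vectors)]


def fit_threshold(normal_vectors, num_std=3.0):
    """Learn a center and a distance cutoff from known-normal embeddings."""
    center = centroid(normal_vectors)
    distances = [math.dist(vector, center) for vector in normal_vectors]
    cutoff = statistics.mean(distances) + num_std * statistics.pstdev(distances)
    return center, cutoff


def is_anomalous(vector, center, cutoff):
    """Flag a vector that lies farther from the center than the cutoff."""
    return math.dist(vector, center) > cutoff


# Toy transaction embeddings (assumption): hand-written, two dimensions only.
normal_transactions = [
    [1.0, 1.0],
    [1.1, 0.9],
    [0.9, 1.1],
    [1.0, 1.1],
    [1.05, 0.95],
]

center, cutoff = fit_threshold(normal_transactions)
print(is_anomalous([1.0, 0.95], center, cutoff))  # a typical-looking transaction
print(is_anomalous([3.0, 0.2], center, cutoff))   # a very different transaction
```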

6. Image and Multimodal Retrieval

Search images using text. Or match text to video. That is cross-modal embedding at work.

Key Features to Look for in an Embedding Platform

Not all platforms are equal.

When evaluating one, consider:

- Model Quality: How accurate are the embeddings on data like yours?

- Latency: How fast are API calls?

- Scalability: Can it handle millions of vectors?

- Customization: Can you fine-tune models?

- Multimodal Support: Text only? Or text, image, audio?

- Security: Enterprise-grade encryption and compliance?

- Pricing: Pay-per-call or subscription?

Your needs define your best option.

Popular Embedding Model Platforms

Let’s look at some widely used platforms. Each has strengths. Each has trade-offs.

1. OpenAI Embeddings API

Known for high-quality text embeddings. Easy REST API. Great documentation.

Best for startups and teams who want fast setup and reliable performance.

2. Cohere Embed

Strong focus on semantic search and enterprise AI. Offers multilingual support.

Good option for companies building customer-facing AI tools.

3. Google Vertex AI Embeddings

Deep integration with Google Cloud. Scales easily. Supports multimodal embeddings.

Ideal for teams already in the Google ecosystem.

4. Amazon Bedrock Embeddings

Works seamlessly with AWS tools. Enterprise-ready.

Perfect for AWS-heavy infrastructure stacks.

5. Hugging Face Inference Endpoints

Huge open-source model library. Customizable deployment.

Best for teams who want flexibility and control.

Comparison Chart

| Platform | Ease of Use | Customization | Multimodal | Best For |
|---|---|---|---|---|
| OpenAI | Very High | Moderate | Limited but growing | Fast product development |
| Cohere | High | Moderate | Text-focused | Semantic search apps |
| Google Vertex AI | Moderate | High | Strong | Large-scale enterprise |
| Amazon Bedrock | Moderate | High | Growing | AWS-based systems |
| Hugging Face | Moderate | Very High | Depends on model | Custom AI workflows |

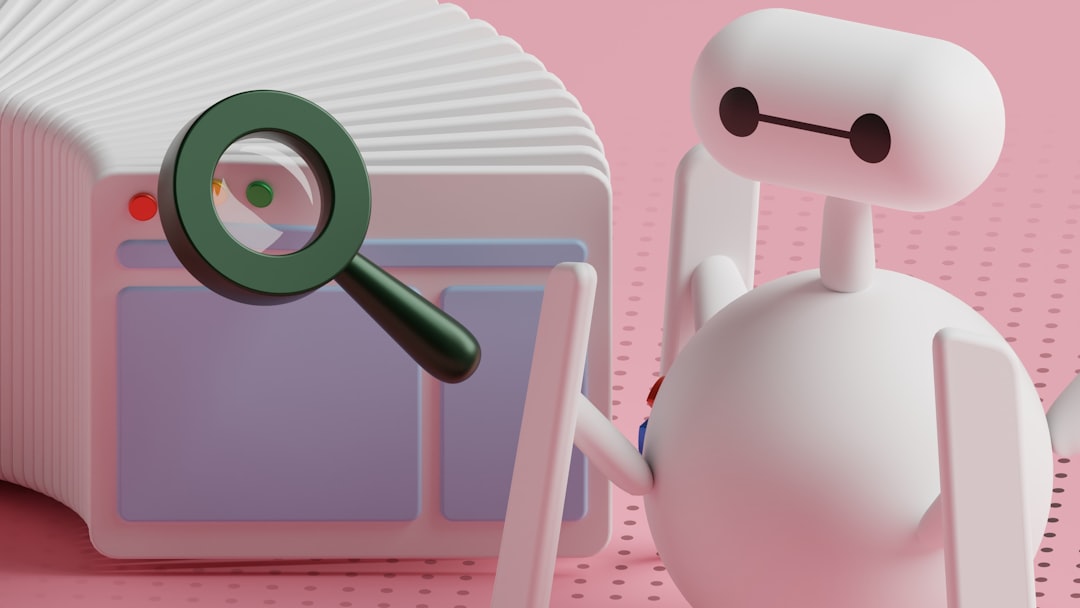

How Embeddings Power RAG Systems

RAG means Retrieval-Augmented Generation.

Here is how it works:

1. The user asks a question.

2. The question is converted into an embedding.

3. The system searches a vector database.

4. It retrieves similar chunks of information.

5. The language model generates an answer using that data.

This can reduce hallucinations and improve accuracy, because responses stay grounded in real data.
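The steps above can be sketched end to end in a few lines. Everything here is a stand-in: `toy_embed` is a hypothetical bag-of-words function that only matches shared words (a real embedding model would capture meaning), the "vector database" is a plain list, and the final step just builds a prompt instead of calling a language model.

```python
import math
from collections import Counter


def toy_embed(text):
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    cleaned = text.lower().replace("?", " ").replace(".", " ")
    return Counter(cleaned.split())


def cosine_similarity(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, index, top_k=1):
    """Embed the question and return the top_k most similar chunks."""
    question_vector = toy_embed(question)
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(question_vector, item[1]),
        reverse=True,
    )
    return [chunk for chunk, _ in ranked[:top_k]]


def build_prompt(question, context_chunks):
    """Assemble the grounded prompt that would be sent to a language model."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical knowledge base, embedded once up front.
chunks = [
    "Refunds are processed within five business days.",
    "Our office is closed on public holidays.",
    "Shipping is free for orders over fifty dollars.",
]
index = [(chunk, toy_embed(chunk)) for chunk in chunks]  # stand-in for a vector database

question = "How long do refunds take?"
retrieved = retrieve(question, index)
prompt = build_prompt(question, retrieved)
print(prompt)
```

Swapping `toy_embed` for a call to a real embedding platform, and the list for a real vector store, would keep the same overall shape.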

Embedding platforms are the first step in that pipeline.

Don’t Forget the Vector Database

Embeddings need storage.

A regular database can hold them, but it is not built to search them by similarity at scale. For that, you typically use a vector database.

Popular options include:

- Pinecone

- Weaviate

- Milvus

- FAISS (a similarity search library rather than a full database)

Embedding platforms create vectors. Vector databases search them fast.
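To show what "search them fast" involves at the smallest possible scale, here is a sketch of an in-memory index. `TinyVectorIndex` is a hypothetical class written for this article, not the API of any product listed above. It compares the query against every stored vector, whereas real vector databases typically use approximate nearest-neighbor indexes to avoid that full scan.

```python
import heapq
import math


def normalize(vector):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


class TinyVectorIndex:
    """Brute-force in-memory index (illustrative only)."""

    def __init__(self):
        self._vectors = {}

    def upsert(self, item_id, vector):
        """Insert or overwrite a vector, stored in normalized form."""
        self._vectors[item_id] = normalize(vector)

    def query(self, vector, top_k=3):
        """Return the top_k (score, item_id) pairs, most similar first."""
        unit = normalize(vector)
        scored = (
            (sum(a * b for a, b in zip(unit, stored)), item_id)
            for item_id, stored in self._vectors.items()
        )
        return heapq.nlargest(top_k, scored)


index = TinyVectorIndex()
index.upsert("a", [1.0, 0.0])
index.upsert("b", [0.0, 1.0])
index.upsert("c", [1.0, 1.0])

matches = index.query([1.0, 0.1], top_k=2)
print([item_id for _, item_id in matches])
```

Normalizing once at insert time is a small design choice that keeps each query down to plain dot products.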

Together, they form the backbone of semantic AI.

Choosing the Right Platform

Ask yourself a few simple questions:

- How much traffic do I expect?

- Do I need multilingual support?

- Is data privacy critical?

- Do I want full control or convenience?

- What is my budget?

If you want speed and simplicity, go with a managed API platform.

If you want full freedom, choose open models with custom deployment.

If you are enterprise-scale, cloud ecosystem alignment matters.

There is no single “best.” Only best for your scenario.

Common Mistakes to Avoid

Many teams rush into embeddings. That can lead to problems.

Avoid these mistakes:

- Using low-quality chunking: Bad chunks mean bad retrieval.

- Ignoring evaluation: Always test embedding performance.

- Overlooking cost scaling: API calls add up quickly.

- Not cleaning data: Garbage in, garbage out.

- Skipping monitoring: Track drift and quality over time.
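On the chunking point, one common starting approach is fixed-size chunks with a small overlap, so a sentence cut at a boundary still appears whole in a neighboring chunk. The sketch below splits on whitespace and uses arbitrary sizes. Both are assumptions, and many teams split on sentences, headings, or tokens instead.

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into word-based chunks that share `overlap` words with the previous chunk."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks


sample_text = " ".join(f"w{i}" for i in range(10))  # "w0 w1 ... w9"
sample_chunks = chunk_words(sample_text, chunk_size=4, overlap=1)
for chunk in sample_chunks:
    print(chunk)
```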

Semantic AI is powerful. But it needs care.
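Part of that care is evaluation. A small labeled test set and a metric such as recall@k already go a long way. The sketch below assumes you have, for each test query, the ranked document IDs your system returned and the IDs a human marked as relevant. The IDs and queries are made up.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant_ids:
        raise ValueError("relevant_ids must not be empty")
    top_k = set(retrieved_ids[:k])
    return len(top_k & set(relevant_ids)) / len(relevant_ids)


def mean_recall_at_k(test_cases, k):
    """Average recall@k over a list of (retrieved_ids, relevant_ids) pairs."""
    scores = [recall_at_k(retrieved, relevant, k) for retrieved, relevant in test_cases]
    return sum(scores) / len(scores)


# Hypothetical evaluation set: (ranked results from your system, human-labeled relevant IDs).
test_cases = [
    (["d1", "d4", "d2"], ["d1", "d2"]),
    (["d7", "d3", "d9"], ["d3"]),
]

score = mean_recall_at_k(test_cases, k=2)
print(f"mean recall@2: {score:.2f}")
```

Running the same test set before and after a model or chunking change gives you a comparable number instead of a gut feeling.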

The Future of Embedding Platforms

The space is moving fast.

Expect to see:

- More multimodal embeddings

- Smaller and faster models

- On-device vector generation

- Better compression techniques

- Stronger privacy features

We are entering an era where meaning becomes searchable everywhere.

Apps will understand context better. Search will feel natural. AI assistants will be more precise.

Final Thoughts

Embedding model platforms are quiet heroes.

They turn text into math. Math into similarity. Similarity into intelligence.

Without embeddings, most of today's semantic AI would not work.

If you want smarter search, better recommendations, or accurate AI assistants, embeddings are your starting point.

Choose your platform wisely. Pair it with a strong vector database. Test often.

Then build something amazing.

Because when machines understand meaning, everything changes.