Artificial intelligence systems are typically designed with guardrails, filters, and moderation layers that limit what they can generate or how they respond. However, a growing conversation in the tech world revolves around a different concept: unfiltered AI. This term refers to AI systems that operate with minimal or no content restrictions, giving users broader access to raw model outputs. As interest in transparency, experimentation, and open-source development increases, unfiltered AI has become both a fascinating and controversial subject.

TLDR: Unfiltered AI refers to artificial intelligence systems that operate with minimal content moderation or restrictions. Unlike heavily moderated AI tools, they generate responses based primarily on their training data without layered safeguards. While this can increase creative freedom and research flexibility, it can also raise ethical, legal, and safety concerns. Understanding how unfiltered AI works requires insight into training data, model architecture, and moderation layers.

What Is Unfiltered AI?

Unfiltered AI describes artificial intelligence systems that either remove or significantly reduce the moderation rules typically built into mainstream AI platforms. Most commercial AI systems use content filters to block harmful, illegal, misleading, or inappropriate responses. In contrast, unfiltered AI models generate output directly from the statistical patterns learned during training, with little or no policy layer in between.

This does not necessarily mean the system is malicious or intentionally dangerous. Instead, it means the system operates with fewer guardrails. In practical terms, users may receive:

- Less censored answers

- More controversial or sensitive content

- Raw interpretations of prompts

- Expanded creative freedom

Unfiltered AI is often found in open-source communities, research environments, or specialized applications where control is placed in the hands of advanced users rather than platform providers.

How Standard AI Filtering Works

To understand unfiltered AI, it helps to first examine how filtered AI systems operate. Most modern AI tools rely on multiple layers:

- Base language model trained on large datasets

- Fine-tuning processes to align outputs with ethical guidelines

- Reinforcement learning from human feedback (RLHF)

- Real-time moderation filters that analyze prompts and outputs

When a user submits a query, the system does not simply generate a reply. It first evaluates the request, checks it against policy frameworks, and may rewrite or block certain outputs. The final response is shaped not only by the model’s training data but also by its alignment training and moderation rules.

Unfiltered AI typically reduces or removes one or more of these filtering steps.
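The layered flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `violates_policy`, `PipelineConfig`, `respond`, and `echo_model` are hypothetical names invented for this example, and the keyword blocklist stands in for the trained classifiers that production systems typically use.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical blocklist; a keyword match is a stand-in for a real moderation classifier.
BLOCKED_TERMS = {"forbidden_topic"}
REFUSAL = "[blocked by moderation]"


def violates_policy(text: str) -> bool:
    """Toy policy check: flag text that contains any blocked term."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)


@dataclass
class PipelineConfig:
    check_prompt: bool = True  # moderation filter applied to the incoming prompt
    check_output: bool = True  # moderation filter applied to the generated reply


def respond(prompt: str, model: Callable[[str], str], config: PipelineConfig) -> str:
    """Run the prompt through whichever moderation stages are enabled."""
    if config.check_prompt and violates_policy(prompt):
        return REFUSAL
    reply = model(prompt)
    if config.check_output and violates_policy(reply):
        return REFUSAL
    return reply


def echo_model(prompt: str) -> str:
    """Stand-in for a base language model: it simply echoes the prompt."""
    return f"echo: {prompt}"


filtered = PipelineConfig()
unfiltered = PipelineConfig(check_prompt=False, check_output=False)

print(respond("tell me about forbidden_topic", echo_model, filtered))
print(respond("tell me about forbidden_topic", echo_model, unfiltered))
```

With both checks enabled the request is refused before the model is ever called; with both disabled the same prompt reaches the model and its reply is returned unchanged. An "unfiltered" deployment, in this simplified picture, is the second configuration.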

How Unfiltered AI Works

At its core, unfiltered AI functions using the same transformer-based neural network architecture as standard large language models (LLMs). The difference lies in deployment and constraint settings.

1. Training Phase

Like their filtered counterparts, unfiltered AI models are trained on massive datasets that can include:

- Books and academic papers

- Websites and forums

- Code repositories

- Publicly accessible documents

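During training, the model learns statistical relationships between words and phrases. It does not “understand” meaning the way humans do. Instead, it estimates a probability distribution over the next token given the preceding context, and text is produced by repeatedly picking a token from that distribution.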
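To make next-token prediction concrete, here is a minimal sketch of turning raw model scores (logits) into probabilities and sampling from them. The three-word vocabulary and the logit values are made up for illustration, and `sample_next_token` is a hypothetical helper, not part of any particular library.

```python
import math
import random
from typing import List, Optional, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Convert logits into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(
    vocab: Sequence[str],
    logits: Sequence[float],
    temperature: float = 1.0,
    rng: Optional[random.Random] = None,
) -> str:
    """Draw one token at random, weighted by its softmax probability."""
    rng = rng or random.Random()
    probs = softmax(logits, temperature)
    return rng.choices(list(vocab), weights=probs, k=1)[0]


# Made-up vocabulary and scores, for illustration only.
vocab = ["cat", "dog", "car"]
logits = [2.0, 1.0, 0.1]

probs = softmax(logits)
greedy = vocab[probs.index(max(probs))]  # the single most likely token
sampled = sample_next_token(vocab, logits, rng=random.Random(0))
print(probs, greedy, sampled)
```

Greedy decoding always picks the highest-probability token, while sampling can return any token in proportion to its probability. Neither step involves a policy check, which is why a model without alignment tuning or moderation simply emits whatever its learned distribution favors.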

2. Minimal Alignment Tuning

Filtered systems often undergo extensive alignment training to ensure compliance with ethical standards. Unfiltered AI may bypass or minimize this stage. This results in outputs that reflect broader patterns in the training data without additional safety rewrites.

3. Reduced Output Moderation

A defining feature of unfiltered AI is the absence or limitation of real-time moderation layers. When a user submits a question, the system responds directly using its probabilistic model, with little interference.

This makes unfiltered AI appealing for:

- Academic research

- Bias testing

- Security analysis

- Creative experimentation

Why Some Developers Prefer Unfiltered AI

There are several motivations behind the development and use of unfiltered AI systems.

Transparency

Researchers may want to observe how a base model behaves without guardrails. This allows for better study of bias, hallucination patterns, and edge cases.

Customization

Organizations sometimes prefer to implement their own moderation systems rather than relying on a third party’s restrictions. An unfiltered base model allows them to:

- Apply internal compliance rules

- Adjust cultural sensitivity standards

- Create domain-specific policies
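As a rough illustration of organization-defined moderation, the sketch below applies a small set of internal rules to a model's raw output. Everything here is hypothetical: the `Rule` and `Policy` classes, the email pattern, and the "Project Atlas" codename are invented for the example, and regular expressions are a deliberately simple stand-in for whatever compliance tooling an organization actually uses.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Rule:
    name: str
    pattern: str  # regular expression to look for in the model output
    action: str   # "block" rejects the whole reply, "redact" masks the match


@dataclass
class Policy:
    rules: List[Rule] = field(default_factory=list)

    def apply(self, text: str) -> str:
        """Apply blocking rules first, then redact whatever remains."""
        for rule in self.rules:
            if rule.action == "block" and re.search(rule.pattern, text):
                return f"[blocked: {rule.name}]"
        for rule in self.rules:
            if rule.action == "redact":
                text = re.sub(rule.pattern, "[redacted]", text)
        return text


# Hypothetical internal policy: mask email addresses, block a confidential codename.
policy = Policy(rules=[
    Rule("email", r"[\w.+-]+@[\w-]+\.[\w.-]+", "redact"),
    Rule("codename", r"(?i)project\s+atlas", "block"),
])

print(policy.apply("Contact alice@example.com today"))
print(policy.apply("Status of Project Atlas?"))
```

Because the rules are plain data, each organization can swap in its own patterns and actions without touching the underlying model, which is the appeal of starting from an unfiltered base.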

Creative Freedom

Writers, artists, and developers may explore unconventional storytelling, speculative ideas, or controversial topics that filtered systems restrict.

Security Research

Cybersecurity professionals may use unfiltered AI to simulate adversarial conversations, test vulnerabilities, or study manipulation risks.

Risks and Concerns

While unfiltered AI offers flexibility, it also raises significant concerns.

1. Misinformation

Unmoderated AI systems may generate misleading or false information with no disclaimers or corrections attached.

2. Harmful Content

Unrestricted outputs can include offensive, extremist, or inappropriate material depending on user prompts.

3. Legal Liability

Organizations deploying unfiltered AI must consider regulatory frameworks surrounding speech, defamation, privacy, and intellectual property.

4. Ethical Challenges

Many experts argue that removing safeguards increases the potential for misuse, harassment, or exploitation.

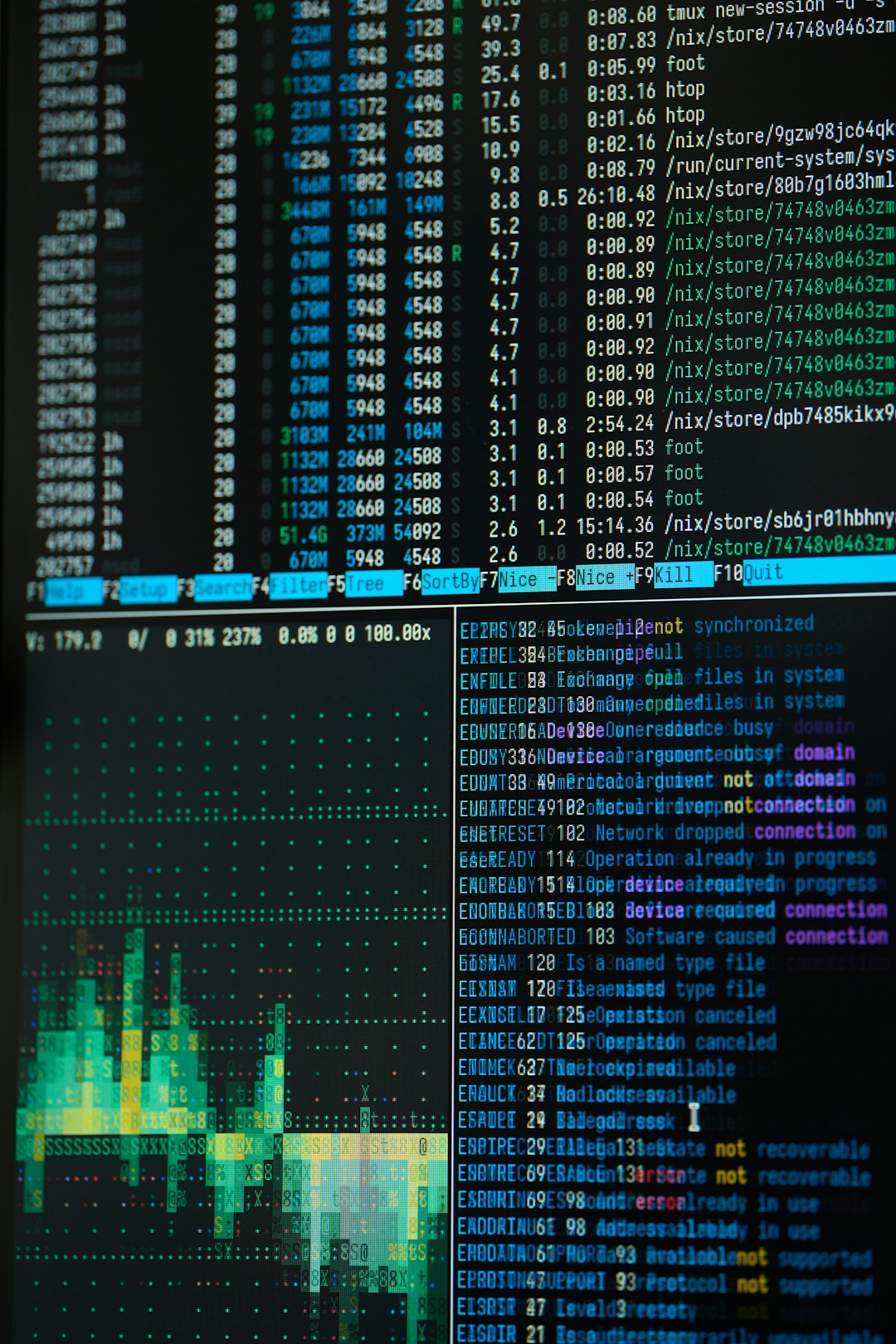

Technical Architecture Behind Unfiltered AI

Despite its name, unfiltered AI is not inherently more advanced or more primitive than filtered AI. Technically, both rely on:

- Transformer neural networks

- Attention mechanisms to weigh contextual importance

- Tokenization systems that break language into processable units

- Probability distributions for generating text
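The attention step in the list above can be sketched as scaled dot-product attention for a single query vector. This is a simplified, pure-Python illustration with made-up two-dimensional vectors; real transformers use learned projection matrices, many attention heads, and optimized tensor libraries.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Normalize scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(
    query: Sequence[float],
    keys: Sequence[Sequence[float]],
    values: Sequence[Sequence[float]],
) -> Tuple[List[float], List[float]]:
    """Scaled dot-product attention for one query over a list of key/value pairs."""
    d = len(query)
    # Similarity of the query to each key, scaled by sqrt(d).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # Output is the weighted average of the value vectors.
    output = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return weights, output


# Toy example: the query matches the first key more closely than the second.
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
weights, output = attention([1.0, 0.0], keys, values)
print(weights, output)
```

The first key receives the larger weight, so the output leans toward the first value vector. This mechanism is identical in filtered and unfiltered models; nothing in it encodes a content policy.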

The crucial distinction lies in deployment configuration. Developers can toggle safety features, disable moderation APIs, or run open-source checkpoints locally without content restriction layers.

In some cases, unfiltered variants are created by further fine-tuning an aligned model so that it no longer produces the refusal behavior it learned during alignment.

Unfiltered AI vs. Filtered AI: Key Differences

| Feature | Filtered AI | Unfiltered AI |
|---|---|---|
| Content Moderation | Strong, multi-layered | Minimal or none |
| Alignment Training | Extensive RLHF | Limited or reduced |
| Legal Safeguards | Integrated protections | User-managed |
| Creative Flexibility | Restricted in sensitive areas | Significantly broader |
| Risk Level | Lower for public misuse | Higher without oversight |

Common Use Cases

Although controversial, unfiltered AI has legitimate applications when used responsibly.

- Academic AI research to study bias and model behavior

- Model auditing to identify systemic weaknesses

- Fiction and creative writing without narrative constraints

- Closed enterprise environments with internal safeguards

In many cases, unfiltered AI is not intended for general public deployment but rather for expert communities who understand the potential risks.

Is Unfiltered AI Truly “Unfiltered”?

The term can be misleading. No AI model is completely free from influence. Even unfiltered systems are shaped by:

- The data they were trained on

- Developer design choices

- Architectural constraints

- Hardware limitations

In reality, “unfiltered” often means less moderated at the output stage rather than entirely unbiased or unrestricted in every dimension.

The Future of Unfiltered AI

As AI regulation evolves worldwide, the future of unfiltered AI remains uncertain. Governments are increasingly focusing on transparency, accountability, and safety standards. This may result in clearer frameworks for deploying models with adjustable filtering levels.

Some experts predict a hybrid approach where base models remain accessible to researchers, while public-facing applications maintain strong moderation. Others foresee customizable moderation systems that users can tailor within regulated boundaries.

Ultimately, unfiltered AI represents an ongoing debate between openness and responsibility, innovation and oversight.

Frequently Asked Questions (FAQ)

1. Is unfiltered AI illegal?

Unfiltered AI itself is not inherently illegal. However, how it is used may violate laws concerning hate speech, harassment, misinformation, or other regulated areas depending on jurisdiction.

2. Is unfiltered AI more powerful than filtered AI?

Not necessarily. Both typically use similar core architectures. The difference lies in moderation and alignment controls rather than raw computational power.

3. Why do some developers remove filters?

Developers may remove filters for research transparency, custom enterprise moderation, creative freedom, or security testing purposes.

4. Does unfiltered AI have more bias?

It can make bias more visible, because fewer safeguards mask problematic outputs. However, all AI systems inherit biases from their training data, whether or not they are filtered.

5. Can unfiltered AI be made safe?

Yes, but safety must then be implemented externally. Organizations can apply their own oversight mechanisms, user access controls, and monitoring systems.

6. Is unfiltered AI available to the public?

Some open-source models are accessible to the public, but many are intended for developers or researchers who understand the technical and ethical implications.

Unfiltered AI remains a complex and evolving subject. While it offers expanded freedom and transparency, it also challenges society to balance technological openness with responsible implementation. As artificial intelligence continues to advance, discussions around filtering, moderation, and ethical design are likely to intensify.