As AI systems become more integrated into everyday workflows, the difference between mediocre and exceptional outputs often comes down to one thing: context. Crafting effective prompts is no longer just about asking the right question—it’s about designing structured environments where large language models (LLMs) can reason, remember, and respond effectively. This discipline, often referred to as context engineering, is rapidly evolving with a new generation of tools designed to help teams prototype, test, optimize, and manage AI-driven interactions at scale.

TL;DR: Context engineering tools help you design better prompts and AI workflows by structuring memory, refining instructions, testing variations, and orchestrating model behavior. These platforms reduce guesswork and make AI outputs more reliable and repeatable. From prompt testing environments to workflow automation builders, the right tools can drastically improve consistency, efficiency, and performance. If you want scalable, production-ready AI systems, context engineering tools are essential.

What Is Context Engineering?

Context engineering is the practice of deliberately designing the informational environment in which an AI model operates. Instead of relying on a single static prompt, you build layers of structured input:

- System instructions that define role and tone

- Memory layers that preserve ongoing conversation history

- Dynamic variables that personalize interactions

- External knowledge retrieval via documents or APIs

- Workflow logic that determines model behavior

Without intentional context design, AI responses can become inconsistent, forgetful, or misaligned. With strong context engineering, outputs become controlled, repeatable, and aligned with business goals.

Why Context Engineering Matters More Than Prompt Writing

Prompt writing is tactical. Context engineering is architectural.

A single clever prompt may produce impressive results in isolation. But production AI systems require:

- Consistency across thousands of queries

- Guardrails to prevent harmful output

- Integration with internal tools

- Memory retention across interactions

- Metrics and testing environments

That’s where specialized tools shine. Instead of manually editing prompts in a chat box, context engineering platforms allow you to systematically design and evaluate AI behavior.

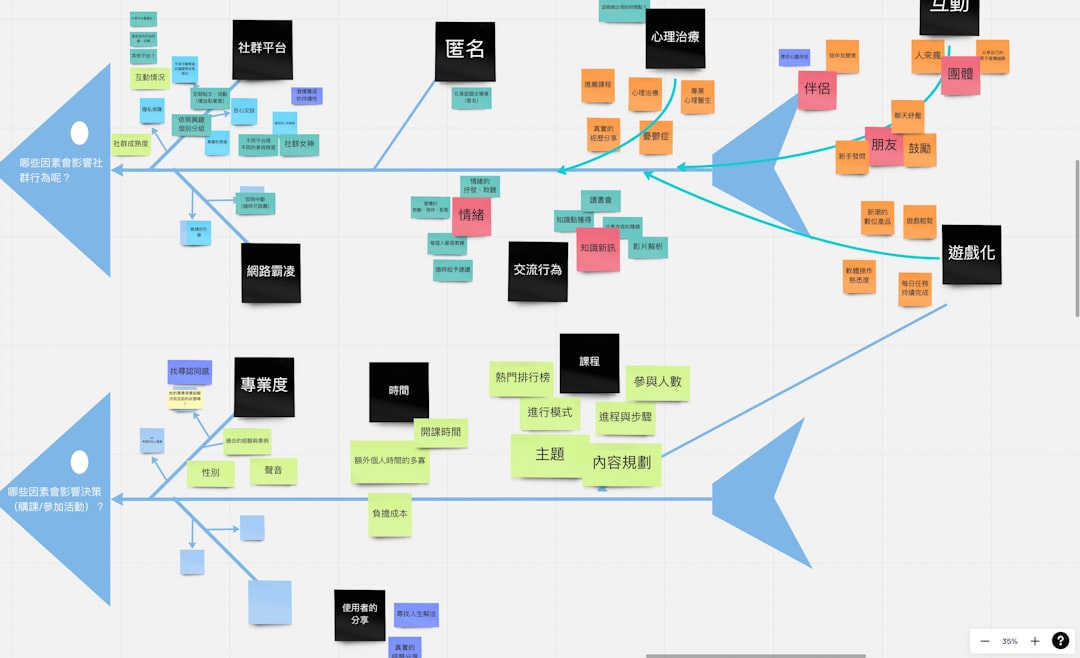

Categories of Context Engineering Tools

Modern tools fall into several functional categories:

- Prompt Testing & Versioning Platforms

- Workflow Orchestration Builders

- Memory & Retrieval Systems

- Evaluation & Monitoring Tools

- Collaboration & Prompt Libraries

Let’s explore standout examples in each category and how they help design better prompts and flows.

1. Prompt Testing & Versioning Tools

These platforms specialize in structured experimentation. Instead of guessing, you can A/B test prompts, compare outputs, and track performance over time.

Key Benefits:

- Side-by-side prompt comparisons

- Version history tracking

- Performance scoring

- Dataset-based validation

LangSmith allows teams to trace execution paths and evaluate LLM chains step by step. It’s particularly powerful when working with multi-step reasoning systems.

PromptLayer provides logging and version control for prompts, helping teams track changes and improve reliability.

Humanloop focuses on evaluation pipelines, letting you benchmark prompt performance against curated datasets.

These tools eliminate the “trial and error” chaos that often accompanies prompt development.

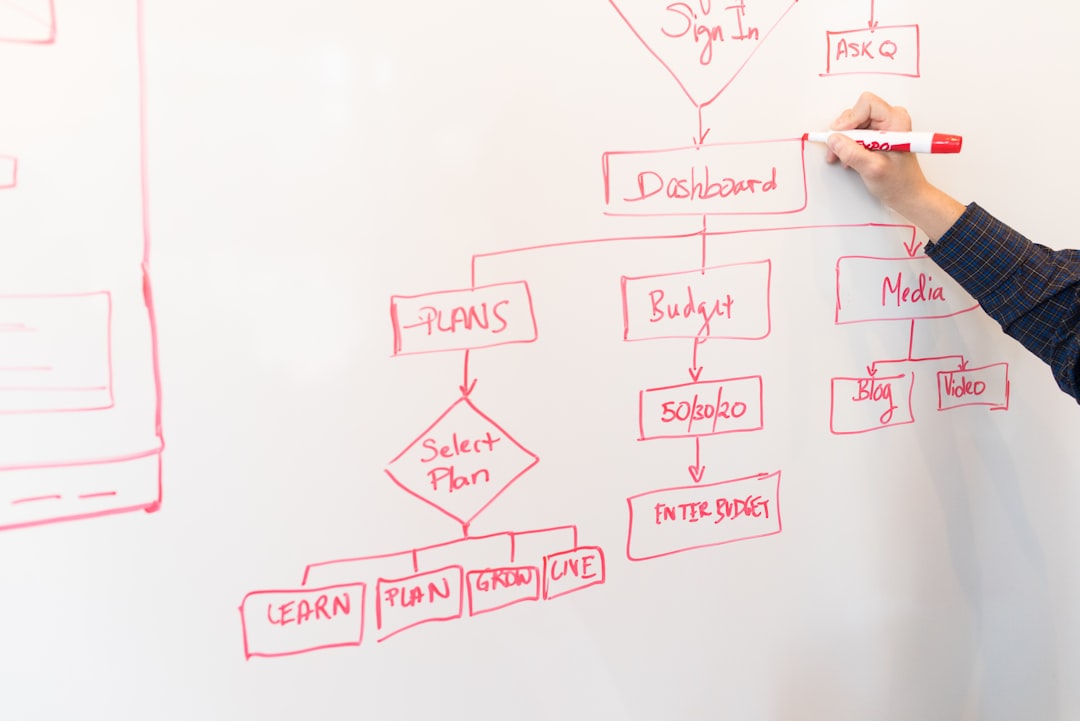

2. Workflow Orchestration Builders

As AI applications grow more complex, a single prompt rarely solves the problem. You may need:

- Conditional logic

- Document retrieval

- API integration

- Tool calling

- Fallback handling

Workflow builders provide visual or programmatic interfaces to design these flows.

LangChain enables developers to chain together prompts, memory modules, and tools into structured pipelines.

LlamaIndex focuses on retrieval-augmented generation (RAG), allowing structured interaction with large document collections.

Flowise offers a more visual, node-based builder experience for those who prefer graphical interfaces.

These orchestration tools transform AI from a reactive chatbot into a proactive system capable of multi-step reasoning and dynamic information gathering.

3. Memory & Retrieval Systems

Large language models have context window limits. Once conversations get long, important information can be forgotten. Context engineering tools solve this with structured memory.

Common Memory Approaches:

- Short-term buffer memory

- Summarized rolling memory

- Vector database retrieval

- Long-term knowledge stores

Pinecone and Weaviate act as vector databases that allow semantic search across stored documents. Instead of cramming all context into a prompt, models retrieve only what’s relevant.

Chroma offers lightweight, developer-friendly retrieval solutions ideal for prototyping.

With proper retrieval design, AI outputs become both more accurate and more efficient—drawing context dynamically instead of relying entirely on instructions.

4. Evaluation & Monitoring Platforms

One overlooked part of context engineering is measurement. How do you know your prompt is actually performing well?

Advanced tools offer:

- Automated scoring systems

- Hallucination detection

- Response time metrics

- Bias monitoring

- Human feedback loops

OpenAI Evals frameworks allow structured benchmarking against defined criteria.

Arize AI and similar observability platforms monitor model drift and output anomalies in production environments.

Without consistent monitoring, even well-designed prompts can degrade over time as models update or user behavior changes.

5. Collaboration & Prompt Management Tools

As teams grow, prompt design becomes collaborative. Version control, shared libraries, and annotations become crucial.

Effective collaboration tools provide:

- Commenting systems

- Change tracking

- Role permissions

- Shared prompt repositories

Centralized prompt hubs reduce duplicated effort and enforce style consistency across teams.

Comparison Chart of Popular Context Engineering Tools

| Tool | Primary Focus | Best For | Visual Interface | Evaluation Features |

|---|---|---|---|---|

| LangSmith | Prompt tracing and testing | Developers building LLM chains | Moderate | Strong |

| PromptLayer | Prompt logging and versioning | Teams managing iterations | Simple dashboard | Moderate |

| Humanloop | Evaluation pipelines | Structured testing workflows | Clean UI | Strong |

| LangChain | Workflow orchestration | Complex AI applications | Code-centric | Optional integrations |

| LlamaIndex | Data retrieval and RAG | Document-heavy use cases | Developer focused | Limited native |

| Pinecone | Vector database | Semantic memory storage | No-code dashboard | N/A |

| Flowise | Visual AI builder | Non-technical users | Strong visual flow | Basic |

How These Tools Improve Prompt Design

Here’s how context engineering tools directly enhance prompt quality:

1. Structured Iteration

Instead of rewriting prompts randomly, you test them against known scenarios and compare performance metrics.

2. Reduced Token Waste

Retrieval systems allow selective context loading, making prompts cleaner and more cost-efficient.

3. Explicit Role Framing

Workflow tools clarify when the model is analyzing, summarizing, critiquing, or generating—improving specificity.

4. Controlled Creativity

By layering instructions and constraints, you balance creativity with guardrails.

5. Repeatability at Scale

Version control ensures consistent behavior across thousands of interactions.

Best Practices When Using Context Engineering Tools

- Start simple. Prototype basic prompts before adding complexity.

- Log everything. Visibility improves optimization.

- Separate instructions from data. Structure inputs cleanly.

- Use evaluation benchmarks. Guesswork leads to unstable systems.

- Continuously refine. Context engineering is ongoing, not one-time.

The Future of Context Engineering

As AI systems gain longer context windows and more advanced reasoning capabilities, context engineering will shift from reactive prompt tweaks to proactive environment design. We’ll likely see:

- Automated prompt optimization

- Self-healing workflows

- Integrated model monitoring dashboards

- Hybrid human-AI evaluation loops

- Composable AI pipelines as standard infrastructure

In the near future, context engineering may become as fundamental to AI development as frontend frameworks are to web development.

Conclusion

Designing effective prompts is no longer a matter of clever phrasing—it’s about engineering the right contextual ecosystem. With specialized tools for testing, orchestration, memory management, evaluation, and collaboration, teams can design AI systems that are consistent, scalable, and strategically aligned.

Whether you’re building internal automations, customer-facing AI products, or research tools, investing in context engineering platforms pays dividends in reliability and performance. The better your context, the better your outputs—and in AI, context is everything.