Speed matters. Nobody likes waiting for an app, chatbot, or website to “think.” In the world of AI, even a one‑second delay can feel slow. That is where AI caching systems step in. They save time. They cut costs. And they make your users happy.

TL;DR: AI caching systems store previously computed results so your app does not repeat the same expensive work. This improves response time and lowers infrastructure costs. From Redis to NVIDIA Triton and GPTCache, these tools make AI apps faster and cheaper to run. If you want better performance without bigger servers, caching is your best friend.

Let’s break it down in a simple way. First, we’ll quickly explain caching. Then we’ll look at seven powerful AI caching systems. Finally, we’ll compare them side by side.

What Is AI Caching?

Caching means storing something so you can reuse it later.

Imagine asking a chatbot the same question twice. Without caching, it generates the answer both times. That takes time and computing power. With caching, the system remembers the first answer and serves it instantly the second time.

In AI systems, caching can store:

- Model responses

- Embeddings

- API calls

- Database queries

- Semantic search results

The result? Faster responses. Lower latency. Lower costs.
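
As a minimal sketch of the idea, an exact-match cache can be a plain dictionary keyed by a hash of the request. The `expensive_model_call` function and the model name are hypothetical stand-ins, not a real API:

```python
import hashlib
import json

# Hypothetical stand-in for a real model call; counts how often it runs.
calls = {"count": 0}

def expensive_model_call(prompt: str) -> str:
    calls["count"] += 1
    return f"answer to: {prompt}"

_cache: dict[str, str] = {}

def cache_key(model: str, prompt: str) -> str:
    """Build a stable key from the model name and a normalized prompt."""
    normalized = " ".join(prompt.lower().split())
    payload = json.dumps({"model": model, "prompt": normalized}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def cached_answer(model: str, prompt: str) -> str:
    key = cache_key(model, prompt)
    if key not in _cache:          # miss: do the expensive work once
        _cache[key] = expensive_model_call(prompt)
    return _cache[key]             # hit: reuse the stored result
```

Including the model name in the key keeps answers from one model from being served for another. Normalizing case and whitespace is a design choice that trades a little precision for more hits.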

1. Redis

The classic speed champion.

Redis is one of the most popular in-memory data stores in the world. It was not built specifically for AI, but it works beautifully with AI systems.

Why developers love Redis:

- In-memory performance (extremely fast)

- Key-value storage

- Easy integration

- Works with almost any backend

For AI apps, Redis often caches:

- LLM outputs

- User session data

- Embedding vectors

If you want something stable and battle-tested, Redis is a safe bet.
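
Here is a hedged sketch of caching LLM outputs with an expiry. It assumes a client object that behaves like `redis.Redis` from the redis-py package (its `get` and `setex` commands); the `llm:` key prefix, the one-hour TTL, and the `generate` callback are illustrative choices, not part of Redis:

```python
import hashlib
from typing import Callable

def cached_llm_output(client, prompt: str, generate: Callable[[str], str],
                      ttl_seconds: int = 3600) -> str:
    """Return a cached LLM output, generating and storing it on a miss.

    `client` is expected to behave like redis.Redis: get(key) returns bytes,
    str, or None, and setex(key, seconds, value) stores a value with an expiry.
    """
    key = "llm:" + hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    cached = client.get(key)
    if cached is not None:
        # redis-py returns bytes unless decode_responses=True is set.
        return cached.decode("utf-8") if isinstance(cached, bytes) else cached
    answer = generate(prompt)
    client.setex(key, ttl_seconds, answer)   # store with a time-to-live
    return answer
```

With redis-py installed and a server running, the client could be created with `redis.Redis(host="localhost", port=6379)`; the host and port here are assumptions about your setup.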

2. GPTCache

Built specifically for large language models.

GPTCache is designed to cache responses from LLMs like GPT models. It reduces repeated API calls. That saves money and time.

What makes it special?

- Semantic caching

- Similarity search support

- Plugs directly into LLM workflows

Instead of matching exact text, GPTCache can match similar queries. That means even slightly different questions can reuse stored answers.

This is powerful for chatbots and customer support AI systems.
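
To illustrate what matching similar queries means, here is a toy semantic cache. This is not GPTCache's API. It is a sketch that uses word counts as a stand-in embedding and cosine similarity with an assumed threshold of 0.8, whereas a real system would use a learned embedding model and a vector index:

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries: list[tuple[Counter, str]] = []

    def get(self, query: str):
        """Return the answer stored for the most similar query, if close enough."""
        q = embed(query)
        best_score, best_answer = 0.0, None
        for vec, answer in self.entries:
            score = cosine(q, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def put(self, query: str, answer: str) -> None:
        self.entries.append((embed(query), answer))
```

The threshold is the key tuning knob: set it too low and users get answers to questions they did not ask, set it too high and the cache behaves like exact matching.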

3. NVIDIA Triton Inference Server

Enterprise-level performance.

NVIDIA Triton helps deploy AI models at scale. It includes a response cache that can return a stored result for an identical inference request instead of running the model again.

This is great for:

- Computer vision systems

- Speech recognition

- Deep learning pipelines

Triton shines in GPU-powered environments. If your AI system runs heavy models, Triton can dramatically cut response times.

It also supports multiple frameworks like:

- TensorFlow

- PyTorch

- ONNX (via ONNX Runtime)

This flexibility makes it a strong choice for large AI teams.

4. Apache Ignite

Memory-focused computing.

Apache Ignite is an in-memory data grid. It combines caching and processing in one tool.

For AI applications, it can:

- Cache training data

- Store session results

- Accelerate real-time analytics

Ignite is built for distributed systems: it spreads data across multiple machines. This increases both speed and reliability.

If you need scalability and advanced data processing, Ignite is worth exploring.

5. Memcached

Simple. Lightweight. Fast.

Memcached is another popular in-memory caching system. It’s been around for years.

It is perfect for:

- Quick deployment

- Simple AI APIs

- Reducing database load

Compared with Redis, Memcached is more basic. But sometimes simple is better. If your AI app just needs quick key-value caching, Memcached does the job.

6. Varnish Cache

Speeding up AI-powered web apps.

Varnish is an HTTP reverse proxy. It caches web responses.

It is not AI-specific. But it is very useful for AI-driven platforms.

For example:

- AI content generation websites

- Recommendation engines

- Search interfaces powered by AI

Varnish can cache the final web output. This reduces repeated rendering and API calls in the background.

The result? A much faster user experience.
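
A reverse proxy typically decides what it may store from the HTTP caching headers your application sends. The sketch below assumes the proxy honours standard `Cache-Control` directives; actual behaviour depends on your Varnish configuration (VCL), and the `cache_headers` helper and the 300-second default are hypothetical:

```python
def cache_headers(personalized: bool, ttl_seconds: int = 300) -> dict[str, str]:
    """Choose response headers that tell a shared cache what it may store."""
    if personalized or ttl_seconds <= 0:
        # Per-user output must never be served to someone else.
        return {"Cache-Control": "private, no-store"}
    # s-maxage sets the lifetime for shared caches such as a reverse proxy.
    return {"Cache-Control": f"public, s-maxage={ttl_seconds}"}
```

The important design choice is the first branch: AI output tailored to one user should be marked as not cacheable by shared caches.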

7. Cloudflare AI Gateway with Caching

Cloud-level AI optimization.

Cloudflare offers caching at the edge. That means responses are stored closer to users.

For AI applications, this means:

- Reduced latency worldwide

- Lower API costs

- Global scalability

Edge caching is powerful for international AI apps. Users in different countries still get fast responses.

It is especially useful for businesses serving global customers.

Comparison Chart: 7 AI Caching Systems

| Tool | Best For | Main Strength | Complexity | AI-Specific? |
|---|---|---|---|---|
| Redis | General AI apps | Ultra-fast in-memory storage | Medium | No |
| GPTCache | LLM applications | Semantic caching | Low to Medium | Yes |
| NVIDIA Triton | Enterprise AI inference | GPU optimization | High | Yes |
| Apache Ignite | Distributed AI systems | In-memory data grid | High | No |
| Memcached | Simple AI APIs | Lightweight speed | Low | No |
| Varnish Cache | AI web platforms | HTTP response caching | Medium | No |
| Cloudflare AI Gateway | Global AI apps | Edge caching | Medium | Partially |

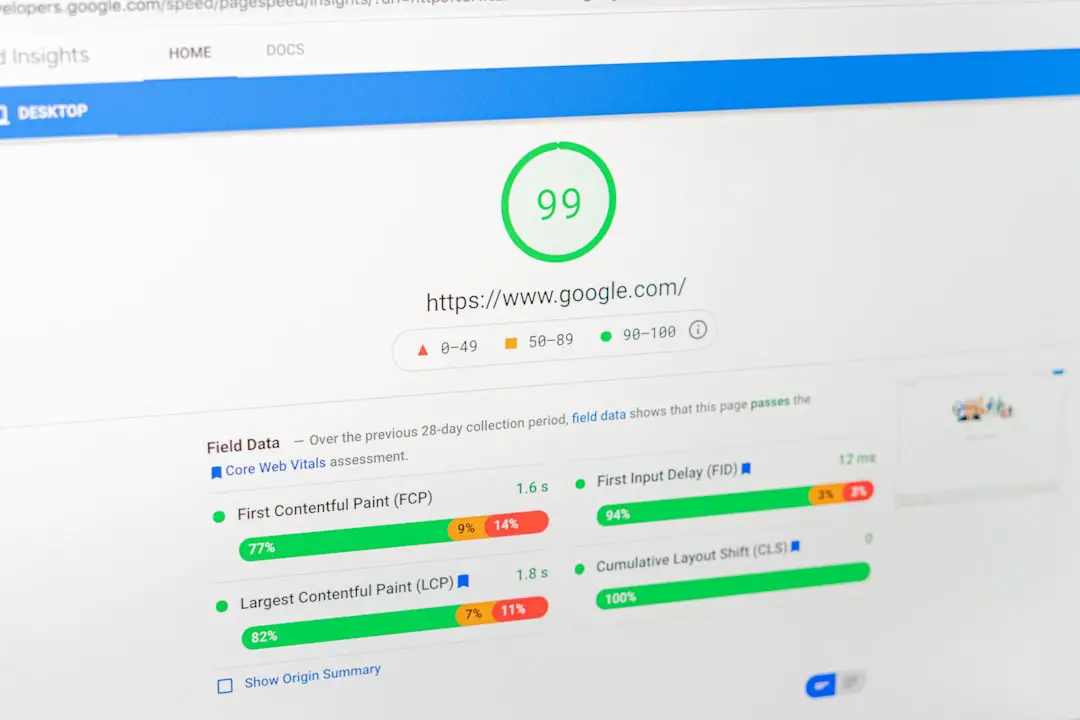

How AI Caching Improves Response Times

Let’s simplify what is happening behind the scenes.

Without caching:

- User sends request.

- Server queries AI model.

- Model processes data.

- Response is generated.

- User waits.

With caching:

- User sends request.

- System checks cache.

- Response is found.

- User gets instant answer.

See the difference?

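No heavy computation. No model run. Just instant delivery. When the response is not in the cache (a miss), the request goes to the model as usual and the result is stored for next time.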
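
The two flows above can be sketched in a few lines. The `slow_model` function is a hypothetical stand-in whose 50 ms sleep simulates inference time; real latencies will differ:

```python
import time

def slow_model(prompt: str) -> str:
    """Hypothetical model call; the sleep simulates inference time."""
    time.sleep(0.05)
    return prompt.upper()

cache: dict[str, str] = {}

def handle_request(prompt: str) -> tuple[str, bool]:
    """Return (response, was_cache_hit)."""
    if prompt in cache:                 # system checks cache
        return cache[prompt], True      # response is found
    response = slow_model(prompt)       # miss: fall back to the model
    cache[prompt] = response            # store for next time
    return response, False

def timed(prompt: str) -> tuple[bool, float]:
    """Run one request and report whether it hit the cache and how long it took."""
    start = time.perf_counter()
    _, hit = handle_request(prompt)
    return hit, time.perf_counter() - start
```

Calling `timed("hello")` twice should report a miss followed by a hit, with the second call skipping the simulated model delay.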

Extra Benefits Beyond Speed

Speed is great. But caching also gives you:

- Lower cloud costs – fewer API calls

- Reduced server load – less pressure on infrastructure

- Better scalability – handle more users at once

- Improved reliability – fallback responses when systems fail

This is especially important for startups and growing AI platforms.
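
How much you save depends on the cache hit rate. As a rough sketch, assuming every request takes either a fixed cache time or a fixed model time, the average latency and the number of billed model calls can be estimated like this:

```python
def expected_latency_ms(hit_rate: float, cache_ms: float, model_ms: float) -> float:
    """Average latency when a fraction `hit_rate` of requests is served from cache."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * cache_ms + (1.0 - hit_rate) * model_ms

def model_calls_needed(requests: int, hit_rate: float) -> int:
    """Requests that still reach the model (and are billed) after caching."""
    return round(requests * (1.0 - hit_rate))
```

For example, with illustrative numbers rather than measurements, `expected_latency_ms(0.5, 10, 1000)` gives 505.0 ms, and `model_calls_needed(1000, 0.25)` gives 750 model calls instead of 1000.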

When Should You Use AI Caching?

Use caching when:

- Users repeat similar questions

- You use expensive LLM APIs

- Latency affects user experience

- You operate at scale

Avoid over-caching when:

- Data changes constantly

- Responses must always be unique

- Real-time precision is critical

Caching is powerful. But smart caching is even better.
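
One common way to cache data that changes is to give every entry a time-to-live (TTL), so stale answers expire on their own. The class below is a minimal sketch; the `TTLCache` name and the injectable `clock` parameter are illustrative choices made so the expiry logic is easy to test:

```python
import time
from typing import Callable, Optional

class TTLCache:
    """Cache whose entries expire after `ttl_seconds`, limiting staleness."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._store: dict[str, tuple[float, str]] = {}

    def get(self, key: str) -> Optional[str]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._store[key]    # expired: force a fresh computation
            return None
        return value

    def put(self, key: str, value: str) -> None:
        self._store[key] = (self.clock() + self.ttl_seconds, value)
```

A short TTL suits fast-changing data, and a long one suits stable answers. When responses must always be unique or exact in real time, skipping the cache entirely is the safer choice.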

Final Thoughts

AI apps are growing fast. Users expect instant answers. Businesses expect lower costs.

AI caching systems help you achieve both.

Whether you choose Redis for battle-tested stability, GPTCache for language models, or Triton for enterprise power, the goal is the same: deliver faster AI experiences.

You do not always need bigger servers. You often just need smarter storage.

Start small. Test response times. Add caching where it matters most.

Your users will feel the difference. And they will thank you for it.